Back to the future - preparing the ground for agentic engineering

Agentic coding is rewriting the rules of software delivery, but can organisations really expect 10x gains simply by applying it to existing processes? How should they adopt agentic engineering - what needs to change, and what can stay the same?

Over the past 18 months, agentic coding has completely upended how software is built. The shift has been seismic, but it hasn't necessarily translated into teams building better software faster. Despite all the progress, it’s become clear that AI transformation was never simply a matter of introducing a bunch of new tools and expecting magic to happen.

Rather, at its core, adoption is an organisational change problem - one that requires careful navigation through the classic adoption bell curve: early adopters paving the way, a tail of sceptics determinedly resisting change, and a frozen middle waiting to see which way the wind will blow.

The key to change management is understanding that we all respond to change in different ways. Different mindsets suit different stages of the journey, and knowing how to bring each group along the path is critical to success. It’s a well-trodden playbook:

Empower your champions. They are your change agents. Their job is to build a consensus that feels considered, practical, exciting, achievable and necessary - backed by a strong internal community, a culture of continuous learning, and clear evidence that the new ways are better.

Listen to the sceptics, then move on. They are resistant for a reason - experience, identity or genuine concern. Whatever the cause, don’t dismiss their views or brush them aside - they may be deeply held and personal. Sceptics need to feel heard, so listen. Address what you can, then move on. Whatever you do, don’t let negativity fester, as it will drag the whole team down. Once won over, sceptics can become your greatest advocates.

Be patient with the fence-sitters, at least initially. They need to feel the weight of momentum before they move, so build it - continually highlight wins, show that the benefits are real, and provide all the training and support they need. Don’t expect immediate change, but it will come. For a few stragglers, consensus may need to give way to mandate.

Driving change on shifting sands

What makes AI adoption so hard is the sheer pace of change, and the uncertainty that comes with it. Can anyone predict where we will be three weeks from now, let alone a year? Can you really afford to take bets when the landscape is so fluid? How do you sound credible as a leader when you’re not entirely sure where you’re leading everyone? Unrealistic expectations and a lack of clarity only feed scepticism (and worse, cynicism).

Equally, the hype and noise from the bleeding edge can be overwhelming. It creates the impression that the old ways should be completely discarded and leaves teams feeling unsure what to follow, what to ignore, and what actually matters. This is especially problematic for organisations building serious, durable software, not vibe coding the latest demo.

The reality is, we’re all still trying to figure this out - the patterns, the practices, the limits of what the technology can do. As a company, you need to be honest about this: none of us can predict the future, but we’re headed in this general direction, and we’ll tack and course-correct as we go.

Your teams will appreciate the candour. No one has all the answers. At the same time, they need to understand that the rules of the game have changed irrevocably. They either get with it or get left behind. Yes, roles are blurring, boundaries are being pushed, everything is up in the air, but this is a natural part of ‘figuring it out’. It will be years before the new best practices bed down.

Wheels within wheels

When you strip it all back - no matter where things end up or how good the technology gets - there are only two questions that need to be answered about AI adoption:

Who's in control? Have we really reached the point where we’re mere onlookers to a bunch of autonomous agents stuck in a Ralph Wiggum loop, merrily programming away, or is AI simply a tool in our human-centred workflows? Who owns what end-to-end?

What’s the impact? How does AI impact the software development lifecycle? What has changed and what remains the same?

The answer to the second will depend greatly on the answer to the first. The more you are willing to throw at the machine, the more it will challenge traditional assumptions about the software delivery lifecycle, including roles, responsibilities, testing, automation, quality gates, etc.

In practice, the question of who owns what does not have a single correct answer. It will depend on your context, emerging practices, further technological progress, and on the fundamentals that have always been true of good software engineering. Most of all, it depends on risk.

New tools, same rules?

To help frame the answer, consider what it takes to build software responsibly in the age of AI. These are not new ideas, but they are rarely applied consistently across teams (even without AI):

AI does not fix a broken process. There’s a reason why high-performing teams work better with AI - they already work well without it. They are optimised for flow, feedback and learning. You will get far greater gains from fixing your people and process issues than you will get from throwing AI over a dysfunctional team. This includes addressing the latent bottlenecks and inadequate quality gates that have been hiding in plain sight (likely drowned out by the noise of coding) in so many underperforming teams.

Coding has been reduced to toil, mostly. Human history is, in many ways, the story of how technology has steadily helped automate our most tedious work. Coding might once have been an unavoidable part of the job, but it was never the most valuable part – that was deciding what to type. So, just let the LLM get on with what it's good at, and focus your attention on higher-order tasks like thinking, planning, designing, reviewing and refactoring.

Small, capable teams get more done. A lifecycle organised around silos of specifiers (BAs), validators (QA), and implementors (developers) requires more ceremony, more communication channels, more handoffs, and more headcount. As a result, ownership falls between the lines, and things get lost in translation, resulting in longer lead times and more rework. Small teams of capable people have always accomplished more than a meticulous process filled with average individuals.

Capable people think beyond their lane. Individuals can no longer hide behind the 'complexity' of coding. The old premise of "where there is mystery, there’s job protection" has gone, so developers must become more T-shaped. Folks who have never stepped outside their React or Spring lane are going to struggle in this new world. The best engineers have always kept an eye on the big picture, on the why, and on what success looks like for the business and its users. This is their time.

Ownership drives motivation. When you only own part of the problem (the code), you worry less about building the right thing – someone else has already made that decision – and focus more on just meeting the specification, not on the wider impact of your changes. Do they deliver the desired business outcomes? Can I challenge the approach or suggest a better way? True ownership and accountability come from owning a problem from concept to delivery.

You can’t outsource accountability. Our attitude to risk is shaped by how likely something is to go wrong and what happens when it does. The greater the potential fallout, the less tolerant we become of risk - we are more accepting of risk when the stakes are low; we demand near certainty and predictability when they are high. Put it this way: would you fly that plane if its software had been built entirely by a swarm of agents? When something goes wrong, we need to be able to stand by our choices.

Throughput must be constrained by understanding. There’s a temptation to believe that LLMs have become so good that they can be both author and judge of their own work - that code is now so cheap that quality matters less, and the machine will do it all. But if human accountability is to remain a thing, and it must, then we must continue to understand what we’re building. In systems of any importance, work cannot be allowed to flow faster than we can reason about it. Even at this constrained pace, we’re still moving much faster than when every line of code was written by hand.

Good design makes AI better. Clear structure and clean code have always made software easier to understand, test and change. Our ability to reason about systems enables us to stand over them. This has not changed. If anything, it matters more. LLMs perform best with clearly partitioned, well-organised systems characterised by strong contractual boundaries and clean code. Left to their own devices, they will quickly drift off course, so a human hand must remain at the tiller.

Quality drives productivity. The AI sales pitch is almost entirely about productivity. However, the smartest teams are using AI to raise the bar first, knowing that faster and better will come as a result. Adoption cannot come at the expense of stability or increased rework, so embed sensible (DORA) metrics into your delivery to help identify and address issues quickly. And beware the unintended consequences of measuring the wrong thing (Goodhart’s Law). Lines of code generated, anyone?
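As a rough illustration of the kind of measures meant here, the sketch below derives three of the DORA metrics (deployment frequency, lead time for changes and change failure rate) from a list of deployment records. The `Deployment` record, its field names and the `dora_summary` function are hypothetical, invented for this example; a real team would pull the equivalent data from its own CI/CD and incident tooling.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass(frozen=True)
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime   # when the change was first committed
    deployed_at: datetime    # when it reached production
    caused_failure: bool     # True if it needed a rollback, hotfix or incident


def dora_summary(deployments: list[Deployment], window_days: int) -> dict[str, float]:
    """Summarise three DORA-style metrics over a reporting window."""
    if not deployments:
        raise ValueError("at least one deployment is required")
    if window_days <= 0:
        raise ValueError("window_days must be positive")

    # Lead time for changes: commit to production, in seconds.
    lead_times = [
        (d.deployed_at - d.committed_at).total_seconds() for d in deployments
    ]
    failures = sum(1 for d in deployments if d.caused_failure)

    return {
        "deployments_per_day": len(deployments) / window_days,
        "median_lead_time_hours": median(lead_times) / 3600,
        "change_failure_rate": failures / len(deployments),
    }
```

Tracking stability (change failure rate) alongside speed (frequency and lead time) is what guards against the Goodhart trap: a rise in throughput that arrives with a rise in failures is visible straight away.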

AI does not replace good engineering. Practices like working in small batches, automated testing, fast CI cycles, and rapid feedback remain essential, as do human-centric activities that maintain quality and reduce cognitive debt - pairing, reviewing and continuous refactoring. Modern engineering practices were never optional; now they’re non-negotiable.

Testing first clarifies intent. Writing tests before code forces engineers to think in terms of specific outcomes and behaviours before diving into implementation. Tests provide a shared contract of expectations between human and machine. This is critical when working with systems that can generate large volumes of code quickly. AI is hugely powerful, but it must be guided, constrained and controlled.
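A minimal sketch of what that contract can look like in practice. The tests are written first and state the expected behaviour; the implementation, whether typed by hand or generated by an agent, only counts as done when they pass. The `slugify` function and its rules are hypothetical, chosen purely for illustration.

```python
import re


# Step 1: the tests come first and describe the behaviour we want.
def test_lowercases_and_hyphenates_words():
    assert slugify("Back to the Future") == "back-to-the-future"


def test_drops_punctuation_and_surrounding_whitespace():
    assert slugify("  New tools, same rules? ") == "new-tools-same-rules"


def test_rejects_titles_with_no_usable_characters():
    try:
        slugify("!!!")
    except ValueError:
        return
    raise AssertionError("expected ValueError for a title with no letters or digits")


# Step 2: the implementation is written (or generated) to satisfy the tests.
def slugify(title: str) -> str:
    """Turn a title into a lowercase, hyphen-separated slug."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    if not words:
        raise ValueError("title must contain at least one letter or digit")
    return "-".join(words)


if __name__ == "__main__":
    # Plain runner so the sketch needs no test framework.
    test_lowercases_and_hyphenates_words()
    test_drops_punctuation_and_surrounding_whitespace()
    test_rejects_titles_with_no_usable_characters()
```

The tests double as the brief for the agent and as the acceptance check for the reviewer, which keeps the human in charge of intent even when the machine writes the body of the function.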

Mileage will vary. Not all software deserves the same level of rigour. Disposable solutions that solve specific, localised problems do not need to be scrutinised to the nth degree - here, AI with minimal oversight is your ideal partner. But somewhere along the continuum between throwaway and safety-critical, there’s an inflexion point where humans must retain control. It is a lot closer to the disposable end of the spectrum than you may think.

No more hiding

When we are asked why our teams are so good with AI, it boils down to one point – they are excellent without it. AI hasn’t changed the laws of physics. The difference is that the technology is pushing us back towards smaller, more capable teams, whilst also amplifying what has always been true – attitude, behaviours, discipline and good old engineering fundamentals optimised for flow, feedback and learning are what help teams succeed.

Equally, folks who have until recently been able to hide behind poor processes and the expense of coding have become more exposed. The days of ticket engineering and process theatre are gone. These types of people will, frankly, struggle in the new order (of smaller, more capable teams).

Where the rubber hits the road

When charting your adoption course, you must first take a deep look at your current ways of working. Are you locked in a world of silos and handoffs, with high levels of performative ceremony? Or are you geared for smaller, more capable teams with people who are comfortable in the grey and can own a problem from concept to delivery?

Fix the system before you fix the tools.

At Instil, agentic engineering has transformed how we build and deliver software. Like many organisations, we've been through the classic adoption bell curve and come out the other side. The change was driven by our internal champions - mostly our most experienced engineers, but also some of our most junior - who led the shift while ensuring that quality engineering and human accountability remained at the heart of our approach.

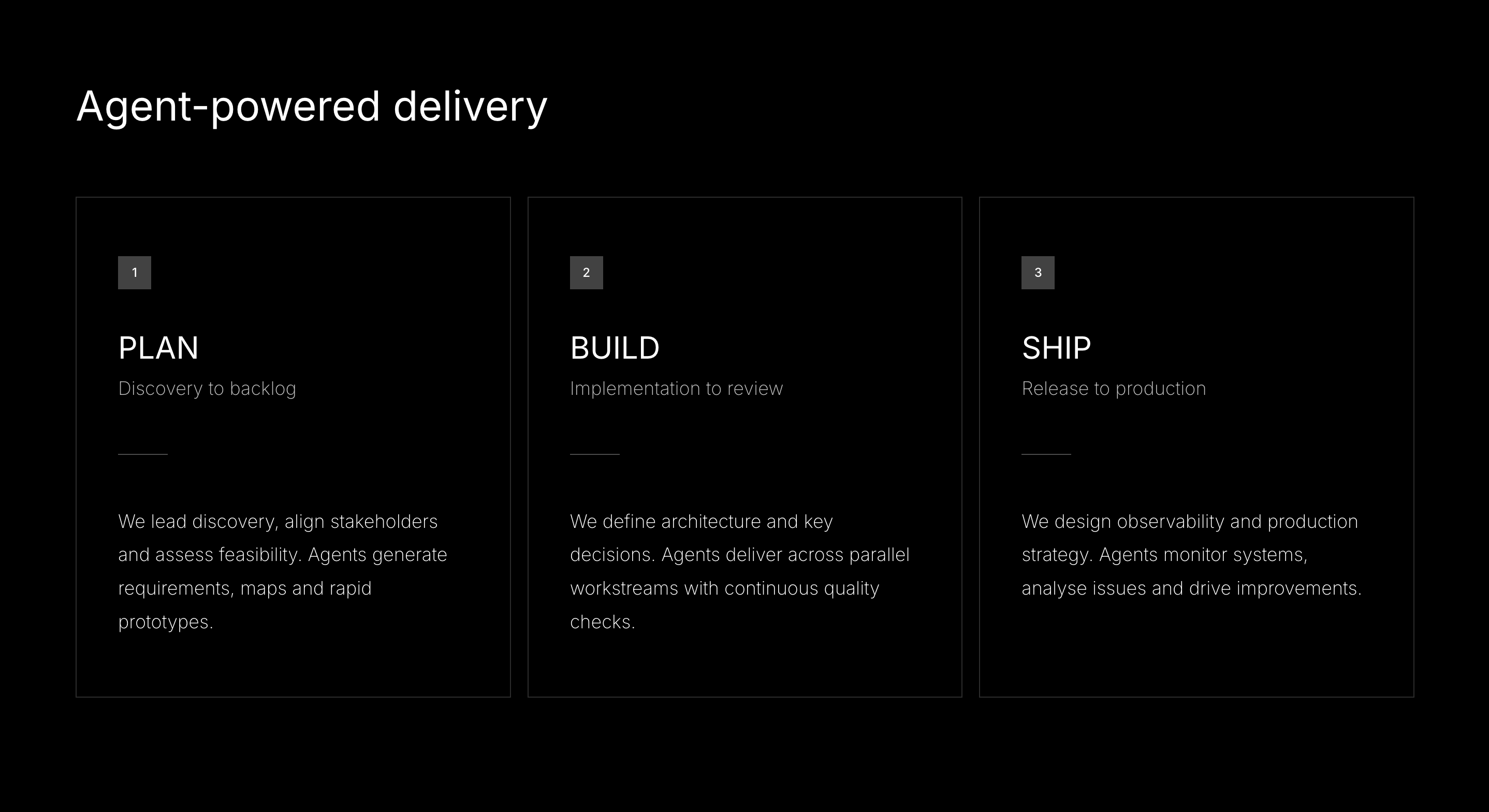

That experience has been distilled into our agentic SDLC – a living document that captures how we expect our teams to use the technology. It is as much a collection of values as it is a set of working practices. It begins with clarity on who is responsible for what, and then sets out which activities sit on each side of the human–machine divide:

Instillers own the problem space, the architecture and the judgement calls. Agents accelerate everything in between.

Pragmatism over dogma

Our approach is underpinned by hard-won experience and pragmatism, not the latest whisperings of the internet. We recognise what is happening at the bleeding edge, but we're not distracted by it:

We apply the right approach for the right problem. Effective use of agentic tooling means directing it, not deferring to it. Some tasks suit full agent delegation; others need hands-on work. Most sit somewhere in between, and knowing which is which is an engineering skill that compounds with experience of working with agents across real projects. Knowing what good looks like, and when something is done, sits firmly with us.

We build continuous learning in. The landscape is shifting quickly. Teams that work with agents daily build practical knowledge that no course can teach, such as when to delegate, when to direct, and when to intervene. That learning is supported through pairing, mentoring, communities of practice, and structured training. But the deeper understanding comes from applying it in practice.

We tailor accordingly. How agents operate varies by engagement. IP ownership, compliance, audit and data controls are defined by our clients. We tailor our delivery approach and tooling to meet their needs.

Final thoughts

In short, you cannot sprinkle AI over existing teams and processes and expect everything to get 10 times better. Applied to bad teams and broken processes, it could make things 10 times worse. First, fix your processes. Then address the skills and behaviour gaps in your people.

If you’re kick-starting your agentic coding journey and need advice, or are further along and struggling to realise the promised gains, then get in touch. Our teams will be very happy to walk you through how they’re using AI to accelerate delivery, improve quality and solve business-critical challenges.

It starts with building high-performing teams.